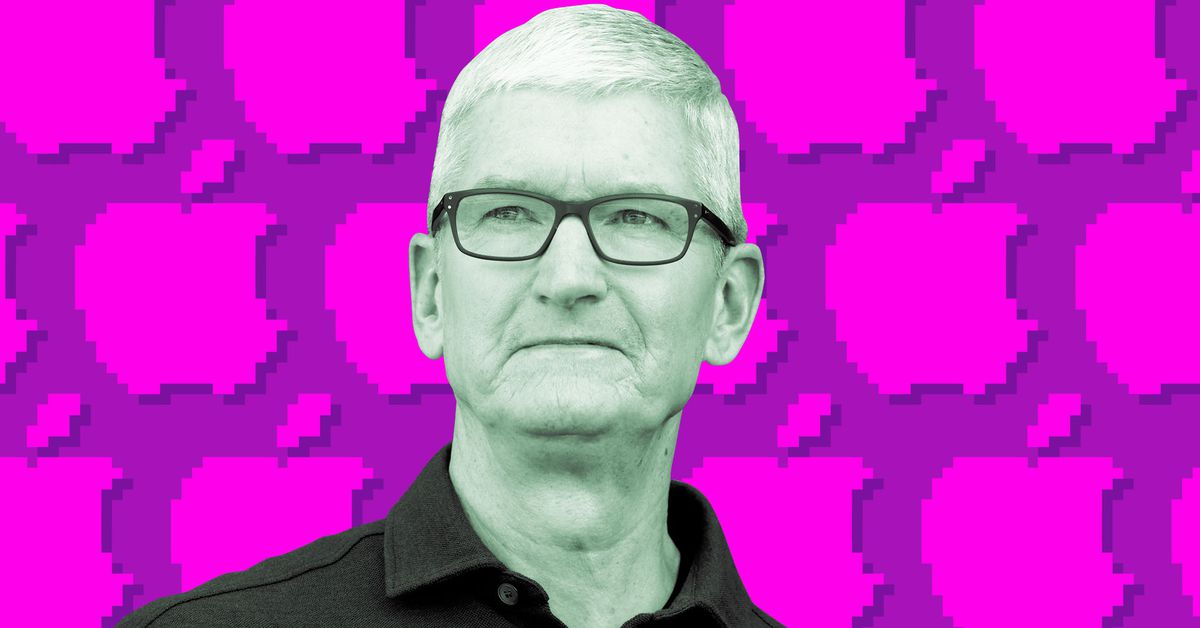

Tim Apple

Seeing these systems just making shit up when they’re not sure on the answer is probably the closest they’ll ever come to human behaviour.

We’ve invented the virtual politician.

Stupid headline, it’s like Tim Cook saying he’s not 100% sure Apple can stop batteries in their devices from exploding. You do as much as you can to prevent it but it might happen anyway because that’s just how it is.

Of course you are getting downvoted, because you are right and not being a reactionary douche like your average lemmizen.

I only trust moguls and political figures that are 100% sure of everything. I really like the confidence and it makes me feel like they deserve big paychecks and special rights because they must be so smart to have no room for doubt like the rest of us spineless imps. This guy is displaying weakness and should be shamed!

I bet Tim Apple is going to fire his ass.

To be 100 percent sure is a hallucination. Probably he tried to say that he is less than 80 percent sure.

They could make Siri change its voice and Genmoji based on the degree of certainty of the response:

- Trust me: Arnold as Terminator 😎

- Eehhhh, could be bullshit: shrugging old man meme 🤷🏻‍♂️

- Just kiddin’ here: whacky Jerry Lewis 🤪

They could sell different voice packages. Revive the ringtone market.

The AI is confidently wrong, that’s the whole problem. If there was an easy way to know if it could be wrong we wouldn’t have this discussion

this paper tries to do that: arxiv.org/pdf/2404.04689

there are also several other techniques I think

While it can’t “know” its own confidence level, it can distinguish between general knowledge (12” in 1’) and specialized knowledge that requires supporting sources.

At one point, I had a ChatGPT memory designed for it to automatically provide sources for specialized knowledge and it did a pretty good job.

I’m not exaggerating when I say there’s only like a dozen true experts for generative AI on the planet and even they’re not completely sure what’s going on in that blackbox. And as far as I’m aware Tim Cook isn’t even one of them. How would he know?

These programs are averaging massive amounts of data into a massive averaging function. There’s no way that a human could ever understand what’s going on inside that kind of function. Humans can’t hold millions of weights/etc in their head and comprehend what it means. Otherwise, if humans could do this, there would be no point in doing this kind of statistics with computers.

I would expect that Apple has hired some of those experts and they told him.

I doubt anyone can for as long as “AI” is synonymous with LLMs. LLMs are just inherently unreliable because of how they work.

I’m 100% sure they can’t because what they call AI isn’t intelligence.

Intelligence is whatever does the job and gets it done well.

AI is whatever makes the dollar sign number get bigger

It’s intelligent in that regard…

Even people hallucinate. Under your definition intelligence doesn’t exist

Wow whoosh. The point is that “AI” isn’t actually “intelligent” like a human and thus can’t “hallucinate” like an intelligent human.

All of this anthropomorphic terminology is just misleading marketing bullshit.

Who said anything about human intelligence? AIs have a different kind of intelligence, an artificial kind. I’m tired of pretending they don’t

Ever heard of the Turing test? Ever since AIs could pass it it became not a thing. Before that, playing Go was the mark of AI.

Any time an AI achieves a new thing people move goalposts. So I ask you: what does AI need to achieve to have intelligence?

The Turing Test says that any person could have any conversation with a machine and there’s no chance you could tell it’s a machine. It does not say that one person could have one conversation with a machine and not be able to tell.

Current text generation models out themselves all the damn time. It can’t actually understand the underlying concepts of words. It just predicts what bit of text would be most convincing to a human based on previous text.

Playing Go was never the mark of AI, it was the mark of improving game-playing machines. It doesn’t represent “intelligence”, only an ability to predict what should happen next based on a set of training data.

It’s worth noting that after Lee Se Dol lost to AlphaGo, researchers found a fairly trivial Go strategy that could reliably beat the machine. It was simply such an easy strategy to counter that none of the games in the training data had included anyone attempting that strategy, so the algorithm didn’t account for how to counter it. Because the computer doesn’t know Go theory, it only knows how to predict what to do next based on the training data.

Detecting the machine correctly once is not enough. You need to guess correctly most of the time to statistically prove it’s not by chance. It’s possible for some people to do this, but I’ve seen a lot of comments on websites accusing HUMAN answers of being written by AIs.
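To put a number on "not by chance": here's a minimal sketch of a one-sided binomial test, assuming each call is an independent 50/50 guess if the judge really can't tell human from machine. The trial counts are made up for illustration, not taken from any study, and `p_value_at_least` is just a name I picked.

```python
from math import comb

def p_value_at_least(correct: int, trials: int, chance: float = 0.5) -> float:
    """Probability of getting `correct` or more right out of `trials` by pure guessing."""
    return sum(
        comb(trials, k) * chance**k * (1 - chance) ** (trials - k)
        for k in range(correct, trials + 1)
    )

# Hypothetical numbers: one lucky call versus a sustained streak.
print(p_value_at_least(1, 1))    # 0.5 -> a single correct guess proves nothing
print(p_value_at_least(16, 20))  # roughly 0.006 -> hard to explain by luck
```

So one correct accusation is a coin flip, while 16 out of 20 would be pretty convincing evidence that the judge can actually tell.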

If the current chat bots improve to reliably not be detected, would that be intelligence then?

KataGo just fixed that bug by putting those positions into the training data. The reason it wasn’t in the training data is because the training data at first was just self-play games. When games that are losses for the AI from humans are included, the bug is fixed.

> When games that are losses for the AI from humans are included, the bug is fixed.

You’re not grasping the fundamental problem here.

This is like saying a calculator understands math because when you plug in the right functions, you get the right answers.

The AI grasps the strategic aspects of the game really well. To the point that if you don’t let it “read” deeply into the game tree, but only “guess” moves (that is, only use the policy network) it still plays at a high level (below professional, but strong amateur)

> Ever heard of the Turing test? Ever since AIs could pass it it became not a thing.

In place of the Turing test we have a new test that informs us whether an individual can properly identify a stochastic parrot

People can mean different things. Intelligence can mean a calculator doing a sum, and it can mean the way humans talk to each other. AI can do some intelligent things without people agreeing that it’s intelligent in the latter sense.

The same thing actually passing a Turing test would require. You’ve obviously read the words “Turing test” somewhere and thought you understood what it meant, but no robot we’ve ever produced as a species has passed the Turing test. It EXPLICITLY requires that intelligence equal to (or indistinguishable from) HUMAN intelligence is shown. Without a liar reading responses, no AI we’ll produce for decades will pass the Turing test.

No large language model has intelligence. They’re just complicated call and response mechanisms that guess what answer we want based on a weighted response system (we tell it directly or tell another machine how to help it “weigh” words in a response). Obviously with anything that requires massive amounts of input or nuance, like language, it’ll only be right about what it was guided on, which is limited to areas it is trained in.

We don’t have any novel interactions with AI. They are regurgitation engines, bringing forward sentences that aren’t theirs piecemeal. Given ten messages, I’m confident no major LLM would pass a Turing test.

The chat bots will pass the Turing test in a few years, maybe 5. Would that be intelligence then?

The Turing test is flawed, because while it is supposed to test for intelligence it really just tests for a convincing fake. Depending on how you set it up I wouldn’t be surprised if a modern LLM could pass it, at least some of the time. That doesn’t mean they are intelligent, they aren’t, but I don’t think the Turing test is good justification.

For me the only justification you need is that they predict one word (or even letter!) at a time. ChatGPT doesn’t plan a whole sentence out in advance, it works token by token… The input to each prediction is just everything so far, up to the last word. When it starts writing “As…” it has no concept of the fact that it’s going to write “…an AI language model” until it gets through those words.

Frankly, given that fact it’s amazing that LLMs can be as powerful as they are. They don’t check anything, think about their answer, or even consider how to phrase a sentence. Everything they do comes from predicting the next token… An incredible piece of technology, despite its obvious flaws.
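The token-by-token loop described here can be sketched with a toy model. Big assumption: the lookup table `NEXT_TOKEN` is a hypothetical stand-in for a real neural network, and it only looks at the last token, whereas a real LLM conditions on the whole context. The point is just the shape of the loop.

```python
# Toy stand-in for a language model: a hypothetical lookup table, not a real network.
NEXT_TOKEN = {
    "<start>": "As",
    "As": "an",
    "an": "AI",
    "AI": "language",
    "language": "model",
    "model": "<end>",
}

def predict_next(context: list[str]) -> str:
    """Return one next token given everything generated so far.

    A real model would use the whole context; this toy only looks at the last token.
    """
    return NEXT_TOKEN[context[-1]]

def generate(max_tokens: int = 10) -> list[str]:
    context = ["<start>"]
    for _ in range(max_tokens):
        token = predict_next(context)  # only ONE token is chosen per step
        if token == "<end>":
            break
        context.append(token)          # the output becomes part of the next input
    return context[1:]

print(" ".join(generate()))  # As an AI language model
```

Nothing in `generate` knows the sentence ahead of time; "model" only exists once the loop gets there.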

> The Turing test is flawed, because while it is supposed to test for intelligence it really just tests for a convincing fake.

This is just conjecture, but I assume this is because the question of consciousness is not really falsifiable, so you just kind of have to draw an arbitrary line somewhere.

Like, maybe tech gets so good that we really can’t tell the difference, and only god knows it isn’t really alive. But then, how would we know not to give the machine legal rights?

For the record, ChatGPT does not pass the Turing test.

ChatGPT is not designed to fool us into thinking it’s a human. It produces language with a specific tone & direct references to the fact it is a language model. I am confident that an LLM trained specifically to speak naturally could do it. It still wouldn’t be intelligent, in my view.

Have you ever heard of the Turing test?

https://en.m.wikipedia.org/wiki/Turing_test

Here you go since you’ve heard of it but don’t understand it.

Current AIs pass it, since most people can’t reasonably tell between AI and human-written stuff every time

It’s dead simple to see if you’re talking to an LLM. The latest models don’t pass the Turing test, not even close. Asking them simple shit causes them to crap themselves really quickly.

Ask ChatGPT how many r’s there are in “veryberry”. When it gets it wrong, tell it you’re disappointed and expect a correct answer. If you do that repeatedly, you can get it to claim there’s more r’s in the word than it has letters.
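For reference, the right answer is trivial to check deterministically. A quick sketch in Python:

```python
word = "veryberry"
r_count = word.count("r")
print(r_count, len(word))  # 3 9
```

Three r's in a nine-letter word, so any answer above nine is impossible on its face.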

No, really, if you understood how the language models work, you would understand it’s not really intelligence. We just tend to humanize it because that’s what our brains do.

There’s a lot of great articles that summarize how we got to this stage and it’s pretty interesting. I’ll try to update this post with a link later.

I think LLMs are useful (and fun) and have a place, but intelligence they are not.

I’m still waiting for the definition of intelligence that won’t have the same moving of goalposts the Turing Test did

I’m happy with the Oxford definition: “the ability to acquire and apply knowledge and skills”.

LLMs don’t have knowledge as they don’t actually understand anything. They are algorithmic response generators that apply scores to tokens, and spit out the highest scoring token considering all previous tokens.

If asked to answer 10*5, they can’t reason through the math. They can only recognize 10, * and 5 as tokens in the training data that are usually followed by the 50 token. Thus, 50 is the highest scoring token, and is the answer it will choose. Things get more interesting when you ask questions that aren’t in the training data. If it has nothing more direct to copy from, it will regurgitate a sequence of tokens that sounds as close as possible to something in the training data: thus a hallucination.
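The "score every token, spit out the highest" step can be sketched like this. The scores are invented for illustration (a real model computes them from its weights), and `pick_token` is a hypothetical helper, not any library's API. Worth noticing: picking the maximum always returns something, even when no candidate really stands out.

```python
from math import exp

def softmax(scores: dict[str, float]) -> dict[str, float]:
    """Turn raw scores into probabilities that sum to 1."""
    top = max(scores.values())
    exps = {tok: exp(s - top) for tok, s in scores.items()}  # subtract max for stability
    total = sum(exps.values())
    return {tok: e / total for tok, e in exps.items()}

def pick_token(scores: dict[str, float]) -> tuple[str, float]:
    """Greedy decoding: return the most probable token and its probability."""
    probs = softmax(scores)
    best = max(probs, key=probs.get)
    return best, probs[best]

# Made-up scores for a prompt seen often in training, e.g. "10*5=".
familiar = {"50": 9.0, "15": 2.0, "500": 1.0}
# Made-up scores for an unfamiliar prompt: nothing stands out.
unfamiliar = {"42": 1.1, "17": 1.0, "96": 0.9}

print(pick_token(familiar))    # "50" with probability close to 1
print(pick_token(unfamiliar))  # still emits a token, at barely over 1/3
```

In the second case the output looks just as definite to the reader as in the first, which is roughly the "confidently wrong" complaint.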

The human could be described in very similar terms. People think we’re magic or something, but we too are just a weighted neural network assembling outputs based strictly on training data built from reinforcement. We are just for the moment much much better with massive models. Of course that is reductive but many seem to forget that brains suffer similarly when outside of training data.

That’s an obsolete description of what a mammal’s brain is.

Do you have a better one?

That’s a strong claim. Got an academic paper to back that up?

I’m slightly confused. Which part needs an academic paper? I’ve made three admittedly reductive claims.

- Human brains are neural networks.

- Its outputs are based on training data built from reinforcement.

- We have a much more massive model than current artificial networks.

First, I’m not trying to make some really clever statement. I’m just saying there is a perspective from which the human brain can be described in similar terms. Nevertheless, let’s look at the only three assertions I make here. Given that the term neural network takes its name from the neurons that make up brains, I assume you don’t take issue with the first. On the second point, I don’t know if linking to scholarly research is helpful. Is it not well established that animals learn through reward circuitry, like the role of dopamine in neuromodulation? We also have… education, where we are fed information so that we retain it and can recount it down the road.

I guess maybe it is worth exploring the third, even though I really wasn’t intending to make a scholarly statement. Here is an article in Scientific American that puts the number of neural connections at around 100 trillion. Now, how that equates directly to model parameters is absolutely unclear, but even if you take glial cells, where the number can be as low as 40-130 billion according to “The search for true numbers of neurons and glial cells in the human brain: A review of 150 years of cell counting”, that number is in the same order of magnitude as current models’ parameters. So if your issue is that AI models are actually larger than the human brain, I guess maybe there is something cogent. But given that there is likely at least a 1000:1 ratio of neural connections to neurons, I just don’t think that is really fair at all.

This can be intuitively understood if you’ve gone through difficult college classes. There’s two ways to prepare for exams. You either try to understand the material, or you try to memorize it.

The latter isn’t good for actually applying the information in the future, and it’s most akin to what an LLM does. It regurgitates, but it doesn’t learn. You show it a bunch of difficult engineering problems, and it won’t be able to solve different ones that use the same principle.

I think the definition is “whichever is more emotionally important to you.” So, in your case, they would be very, very intelligent.

LLMs aren’t even hallucinating though. It’s a euphemistic term to make their limitations sound human-like.

This is some real “what else besides witches floats in water” ass-logic

Very small rocks!

“Hallucination” is an anthropomorphized term for what’s happening. The actual cause is much simpler, there’s no semantic distinction between true and false statements. Both are equally plausible as far as a language model is concerned, as long as it’s semantically structured like an answer to the question being asked.

That’s also pretty true for people, unfortunately. People are deeply incapable of differentiating fact from fiction.

No that’s not it at all. People know that they don’t know some things. LLMs do not.

Exactly, the LLM isn’t “thinking,” it’s just matching inputs to outputs with some randomness thrown in. If your data is high quality, a lot of the time the answers will be appropriate given the inputs. If your data is poor, it’ll output surprising things more often.

It’s a really cool technology in how much we get for how little effort we put in, but it’s not “thinking” in any sense of the word. If you want it to “think,” you’ll need to put in a lot more effort.
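The "randomness thrown in" is typically a sampling step. A minimal sketch, assuming temperature-scaled sampling over made-up scores; `sample_token` and all the numbers are hypothetical, picked only to show the effect.

```python
import random
from math import exp

def sample_token(scores: dict[str, float], temperature: float, rng: random.Random) -> str:
    """Sample one token; lower temperature concentrates probability on the top score."""
    scaled = {tok: s / temperature for tok, s in scores.items()}
    top = max(scaled.values())
    weights = [exp(s - top) for s in scaled.values()]  # unnormalized softmax weights
    return rng.choices(list(scaled), weights=weights, k=1)[0]

scores = {"Paris": 2.0, "Lyon": 1.0, "Berlin": 0.5}  # invented scores
rng = random.Random(0)  # seeded so runs are repeatable

cold = {sample_token(scores, 0.01, rng) for _ in range(100)}
hot = [sample_token(scores, 5.0, rng) for _ in range(1000)]
print(cold)           # only the top-scoring token
print(len(set(hot)))  # at high temperature the other tokens show up too
```

Same scores, same "model", different answers depending only on how much randomness you allow.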

Your brain is also “just” matching inputs to outputs using complex statistics, a huge number of interconnects and clever digital-analog mixed ionic circuitry.

Like how many, five?

That’s like saying you can’t be 100% sure you never have fake news at the top of search query results. It’s just a fact.

Tim Cook…go take your meds and watch Price is Right

It’s insane how many people already take AI as more capable/accurate than other media. I’m not against AI, but I’m definitely against the bubble of worship that some people have put it in.

As others are saying it’s 100% not possible because LLMs are (as Google optimistically describes) “creative writing aids”, or more accurately, predictive word engines. They run on mathematical probability models. They have zero concept of what the words actually mean, what humans are, or even what they themselves are. There’s no “intelligence” present except for filters that have been hand-coded in (which of course is human intelligence, not AI).

“Hallucinations” is a total misnomer because the text generation isn’t tied to reality in the first place, it’s just mathematically “what next word is most likely”.

all we know about ourselves is what’s in our memories. the way normal writing or talking works is just picking what words sound best in order

That’s not the whole story. “The dog swam across the ocean.” is a grammatically valid sentence with correct word order. But you probably wouldn’t write it because you have a concept of what a dog actually is and know its physiological limitations make the sentence ridiculous.

The LLMs don’t have that kind of smarts. They just blindly mirror what we do. Since humans generally don’t put those specific words together, the LLMs avoid it too, based solely on probability. If lots of people started making bold claims about oceanfaring canids (e.g. as a joke), then the LLMs would absolutely jump onboard with no critical thinking of their own.

Humans do the same thing. Have you heard of religion?

I was wondering, are people working on networks that train to create a modular model of the world, in order to understand it / predict events in the world?

I imagine that that is basically what our brains do.

Many attempts, some well-funded.

They have been successful in very limited domains. For example, the F-35 integrated sensor suite.

> For example, the F-35 integrated sensor suite.

Now I know why they crash so often

Not really anything properly universal, but a lot of task specific models exists with integration with logic engines and similar stuff. Performance varies a lot.

You might want to take a look at Wolfram Alpha’s plugin for ChatGPT for something that’s public

Yeah I’m sure folks are working on it, but I’m not knowledgeable or qualified on the details.

They do have internal concepts though: https://www.lesswrong.com/posts/yzGDwpRBx6TEcdeA5/a-chess-gpt-linear-emergent-world-representation

Probably not of what a human is, but a thought process is needed for better text generation and is therefore emergent in their neural net

The problem is they have many different internal concepts with conflicting information and no mechanism for determining truthfulness or for accuracy or for pruning bad information, and will sample them all randomly when answering stuff

Ok, maybe there’s a possibility someday with that approach. But that doesn’t reflect my understanding or (limited) experience with the major LLMs (ChatGPT, Gemini) out in the wild today. Right now they confidently advise ingesting poison because it’s grammatically sound and they found it on some BS Facebook post.

If ML engineers can design an internal concept of what constitutes valid information (a hard problem for humans, let alone machines) maybe there’s hope.

Ethical and healthy is a whole lot harder problem lol. Having reasoning and thinking will come before that

An LLM once explained to me that it didn’t know, it simulated an answer. I found that descriptive.

Remember the game people used to play that was something like “type ‘my girlfriend is’ and then let your phone keyboard’s autosuggestion take it from there”? LLMs are that.

I don’t know why they’re trying to shove AI down our throats. They need to take their time, allow it to evolve.

Because it’s all a corporation and a huge part of the corporate capitalist system is infinite growth. They want returns, BIG ones. When? Right the fuck now. How do you do that? Well, AI would turn the world upside down like the dot-com boom. So they dump tons of money into AI. So… is the AI done? Oh no no no, we’re at machine learning, AI is pretty far down the road actually. What, we’re firing the AI department heads and releasing this machine learning software as 100% all-the-way-done AI?

It’s all the same reasons section 8 housing and low cost housing don’t work under corporate capitalism. It’s profitable to take government money, it’s profitable to have low rent apartments. That’s not the problem, the problem is THEY NEED THE GROWTH NOW NOW NOW!!! If you have a choice between owning a condo where you have high wage renters, and you add another $100 to rent every year, you get more profit faster. No one wants to invest in a 10% increase over 5 years if they can invest in 12% over 4 years. So no one ever invests in low rent or section 8 housing.
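To make the 10%-over-5-years versus 12%-over-4-years comparison concrete, here's a rough sketch that converts both to a yearly rate. It assumes simple compound growth, and `annualized` is just a helper name for illustration.

```python
def annualized(total_return: float, years: float) -> float:
    """Compound annual growth rate implied by a total return over `years`."""
    return (1 + total_return) ** (1 / years) - 1

slow = annualized(0.10, 5)
fast = annualized(0.12, 4)
print(f"{slow:.2%} vs {fast:.2%}")  # about 1.9% vs 2.9% per year
```

Under that assumption the second option grows roughly half again as fast per year, which is the gap the comment is pointing at.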

Of course they can’t. Any product or feature is only as good as the data underneath it. Training data comes from the internet, and the internet is full of humans. Humans make and write weird shit, so the data that the LLM ingests is weird, and this creates hallucinations.