Arguing with an AI because it always fails with humans 👌

Still better than being a troll because no one cares about you.

What I got out of ChatGPT:

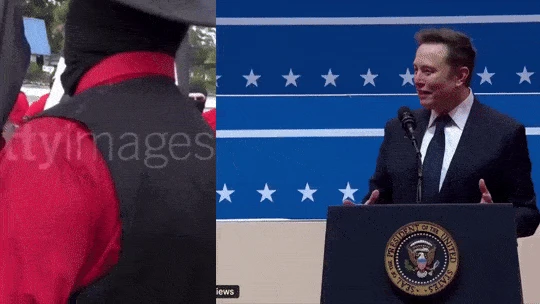

"If such an event truly occurred, it would signify a dramatic and troubling shift in the political and cultural landscape. Here are the implications and reflections that would arise from Elon Musk performing a Nazi salute at Donald Trump’s second presidential inauguration:

- Mainstreaming of Extremism

Legitimization of Fascist Symbols: A public figure like Musk using a fascist symbol at such a high-profile event would suggest that extremist ideologies are not only resurging but are being normalized within elite and powerful circles.

Cultural and Political Tolerance: The fact that this happened openly would imply that the cultural and political environment has grown tolerant—or even supportive—of these ideologies.

- Economic Power as Political Influence

Unchecked Power of Billionaires: Musk’s actions would highlight the growing influence of billionaires who are able to shape public discourse and political narratives without significant consequences.

Corporate Fascism Concerns: It might signal a troubling intersection of corporate power and authoritarian ideologies, blurring the lines between capitalism and fascism.

- Complicity of Political Leadership

Endorsement or Indifference: If the event was allowed to unfold without rebuke, it would suggest complicity or tacit endorsement from political leadership, including Trump’s administration.

Failure of Democratic Institutions: Such inaction would reveal a significant erosion of institutional strength and the ability to uphold democratic values against extremist threats.

- Global Ramifications

Signal to Authoritarian Leaders: This act, performed at a U.S. presidential inauguration, would send a chilling message worldwide, emboldening authoritarian leaders and movements.

Decline of U.S. Moral Authority: The United States, traditionally seen as a beacon of democracy, would lose credibility in promoting human rights and combating extremism globally.

- Societal Polarization and Reaction

Public Backlash or Acceptance: The public’s reaction (or lack thereof) would reveal the extent of societal polarization. Widespread outrage would indicate resilience, while apathy or acceptance would highlight deep fractures and radicalization.

Proliferation of Conspiracy Theories: Such an act would likely be exploited by conspiracy theorists and extremists to sow further division.

- Historical Context and Responsibility

Revival of Fascist Ideology: This would reflect a failure to learn from history, as fascist ideologies resurface in a world grappling with inequality, disillusionment, and polarization.

Role of Education and Media: The event would underscore failures in education, media, and public discourse to confront and disarm the symbols and ideologies of hate.

Urgent Questions

Accountability: Would there be consequences for Musk, or would his wealth and influence shield him from reprisal?

Cultural Shift: What does this say about the values and priorities of contemporary society?

Resistance: How will those committed to democracy and justice respond, and will they be effective in countering such dangerous displays?

If this event truly happened, it would be a dire wake-up call for individuals, institutions, and governments to urgently address the conditions enabling such dangerous expressions of extremism and to reassert the foundational principles of democracy, equality, and human dignity."

Poor GPT isn’t developed enough to comprehend human stupidity. Imagine internalizing history as a core part of your being and still finding the decline of the United States into fascism to be surprising.

It’s not even the first attempted fascist coup. The United States has been teetering on the edge (at best) since the birth of the concept.

ChatGPT isn’t capable of internalizing things

It finds the info online and repeats it

Yes and my phone isn’t thinking when I’m waiting on a spinner but that’s how human language works.

Also, not all AI output is based on web searches. Generative AI can be used offline and will spit out information derived from its internal weights, which were set based on training data, so it is quite literally internalizing information.

The web searches are a way to seed the AI with relevant context (and to account for its training being a snapshot of some past time); they aren't necessary for it to produce output.
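For anyone curious what "seeding with context" can look like in practice, here is a rough sketch. It assumes the simplest possible setup: the search results and the current date are just pasted in front of the question before the model sees it. The function name `build_prompt`, the prompt wording, and the example date are all made up for illustration; real products almost certainly do something more elaborate.

```python
from datetime import date


def build_prompt(question, snippets=(), today=None):
    """Assemble the text a model would see (hypothetical format, for illustration)."""
    parts = []
    if today is not None:
        # Without this line the model can only guess the date from its training snapshot.
        parts.append(f"Today's date: {today.isoformat()}")
    if snippets:
        parts.append("Context from a web search:")
        parts.extend(f"[{i}] {s}" for i, s in enumerate(snippets, start=1))
    parts.append(f"Question: {question}")
    return "\n".join(parts)


# Offline use: nothing but the question, so any answer comes from the weights alone.
print(build_prompt("What year is it?"))

# Seeded use: the same question plus a date and a placeholder search result.
print(build_prompt(
    "What year is it?",
    snippets=["(placeholder text standing in for a real search result)"],
    today=date(2025, 1, 25),  # example date, chosen arbitrarily
))
```

The model is the same in both calls; the only thing that changes is how much up-to-date text it gets handed along with the question.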

Pedantry is all well and good, but if you're being pedantic you should also be precise.

no

The AI is stuck in 2023 as it cannot bear the dystopia of 2025.

Smart AI.

In Soviet-2025 US, AI tells you that you are hallucinating.

It truly is a stochastic parrot, and you can spot the style it has been trained on.

The AI “refuses” to “believe” it’s 2025 as well. AI is not sentient, not aware, and has no beliefs… AI has less understanding about what it’s talking about than the average crypto bro. Just because it’s sophisticated, complicated, and incredibly well honed at selected tasks does not mean it’s intelligent. It’s both an incredibly advanced parrot and less intelligent than a parrot at the same time. Stop expecting it to have knowledge, opinions, a worldview, values, and morals. It doesn’t. At best, sometimes, it has been trained to mimic those things.

we are currently in 2023,

Because AI is too smart to join a brain dead witch hunt.

“AI, if I move my arm like this does it mean I will turn into a Nazi?”

AI, “No, of course not. Hand motions in themselves do not turn people into or insinuate they are Nazis. Do you have any other questions?”

“Ein Volk, Ein Reich, Ein Führer!”

Keep your head in the sand, man.

You too

AI is not smart, and has no desires or independent values. It is not sentient. Also, the dude supports far right political parties both here and abroad, he constantly retweets and amplifies far right voices on X, including actual Nazis, and that was 100 percent a Nazi Salute, twice. Reality is inconvenient for the Right sometimes, but it’s fucking reality.

that was 100 percent a Nazi Salute

Or maybe some troll posted some frames out of context that happen to validate your hatred?

AI is not smart, and has no desires

So it’s more objective than most humans. No emotional bias is a massive advantage

Fuck off, Nazi.

Or maybe some troll posted some frames out of context that happen to validate your hatred?

He did this twice in a row.

Thank you I was going to have to come back and post this just in case he was ignorant.

It’s not truly without emotional bias. It’s been trained to mimic what the person controlling it thinks is valid. It’s a bunch of micro-adjusted dials, tuned until it responds just the right way.

That’s not the same as truly being without desires, and people misunderstand this and think it has no emotional bias. It’s meant to respond the way we want it to, and it’s trained on our biases.

People are trying to create a digital god, but its creators are already flawed and cannot help but pass those flaws on.

That’s how I know you have zero interest in the truth. It’s not just some screenshot out of context. There’s video of him doing it. It’s unambiguous. You didn’t even bother watching though before denying it.

And AI is not sentient, has no knowledge or understanding, it literally parrots back recombined shit it was trained on. It throws spaghetti at the wall in different random ways until it’s told it did it right and so it keeps throwing spaghetti that way. It doesn’t know what the spaghetti means. It doesn’t know what your prompt means. It’s an illusion of understanding. It has none.

you didn’t see sam alt-right-man at the rally? not hard to inject bias into the thing you own

DeepSeek is Chinese trash; it also refuses to acknowledge the Tiananmen Square massacre.

Do people actually bother reading that shit? You know for a fact that it’s inaccurate trash delivered by a deeply-flawed program.

It’s spicy autocorrect running on outdated training data. People expect too much from these things and make a huge deal when they get disappointed. It’s been said earlier in the thread, but these things don’t think or reason. They don’t have feelings or hold opinions and they don’t believe anything.

It’s just the most advanced autocorrect ever implemented. Nothing more.

The recent DeepSeek paper shows that this is not the case, or at the very least that reasoning can emerge from “advanced autocorrect”.

I doubt it’s going to be anything close to actual reasoning. No matter how convincing it might be.

Okay, but why? What elements of “reasoning” are missing, what threshold isn’t reached?

I don’t know if it’s “actual reasoning”, because try as I might, I can’t define what reasoning actually is. But because of this, I also don’t say that it’s definitely not reasoning.

Ask the AI to answer something totally new (not matching any existing training data) and watch what happens. It’s highly probable that the answer won’t be logical.

Reasoning is being able to improvise a solution with provided inputs, past experience and knowledge (formal or informal).

AI, or should I say machine learning, is not able to do that today. It is only mimicking reasoning.

It doesn’t think, meaning it can’t reason. It makes a bunch of A or B choices, picking the most likely one from its training data. It’s literally advanced autocorrect and I don’t see it ever advancing past that unless they scrap the current thing called “AI” and rebuild it fundamentally differently from the ground up.

As they are now, “AI” will never become anything more than advanced autocorrect.

It doesn’t think, meaning it can’t reason.

- How do you know thinking is required for reasoning?

- How do you define “thinking” on a mechanical level? How can I look at a machine and know whether it “thinks” or doesn’t?

- Why do you think it just picks stuff from the training data, when the DeepSeek paper shows that this is false?

Don’t get me wrong, I’m not an AI proponent or defender. But you’re repeating the same unsubstantiated criticisms that have been repeated for the past year, when we have data that shows that you’re wrong on these points.

Until I can have a human-level conversation, where this thing doesn’t simply hallucinate answers or start talking about completely irrelevant stuff, or talk as if it’s still 2023, I do not see it as a thinking, reasoning being. These things work like autocorrect and fool people into thinking they’re more than that.

If this DeepSeek thing is anything more than just hype, I’d love to see it. But I am (and will remain) HIGHLY SKEPTICAL until it is proven without a drop of doubt. Because this whole “AI” thing has been nothing but hype from day one.

Until I can have a human-level conversation, where this thing doesn’t simply hallucinate answers or start talking about completely irrelevant stuff, or talk as if it’s still 2023, I do not see it as a thinking, reasoning being.

You can go and do that right now. Not every conversation will rise to that standard, but that’s also not the case for humans, so it can’t be a necessary requirement. I don’t know if we’re at a point where current models reach it more frequently than the average human - would reaching this point change your mind?

These things work like autocorrect and fool people into thinking they’re more than that.

No, these things don’t work like autocorrect. Yes, they are autoregressive (each generated token is fed back in as input), but that’s not the same thing - and mathematical analysis of the model shows that it’s not a simple Markov process. So no, it doesn’t work like autocorrect in a meaningful way.
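To make the Markov point concrete, here is a toy comparison. It assumes "autocorrect" means a first-order Markov (bigram) predictor that only looks at the previous word. The second predictor is just a lookup table keyed on the entire preceding text. That is emphatically not how an LLM works internally; it only stands in for "something that conditions on the whole context". The corpus and all function names are invented for the example.

```python
from collections import Counter, defaultdict

# Tiny made-up corpus, only for illustration.
CORPUS = [
    "the cat sat on the mat",
    "the dog barked at the mailman",
    "the dog barked at the mailman",
]


def train_bigram(sentences):
    """Count next-word frequencies given only the previous word."""
    counts = defaultdict(Counter)
    for sentence in sentences:
        words = sentence.split()
        for prev, nxt in zip(words, words[1:]):
            counts[prev][nxt] += 1
    return counts


def predict_bigram(counts, context):
    """First-order Markov prediction: everything before the last word is ignored."""
    last_word = context.split()[-1]
    return counts[last_word].most_common(1)[0][0]


def train_full_context(sentences):
    """Count next-word frequencies given the entire preceding text."""
    counts = defaultdict(Counter)
    for sentence in sentences:
        words = sentence.split()
        for i in range(1, len(words)):
            counts[tuple(words[:i])][words[i]] += 1
    return counts


def predict_full_context(counts, context):
    """Prediction that depends on every word of the context seen in training."""
    return counts[tuple(context.split())].most_common(1)[0][0]


bigram = train_bigram(CORPUS)
full = train_full_context(CORPUS)

for ctx in ("the cat sat on the", "the dog barked at the"):
    # Both contexts end in "the", so the bigram model gives the same word for both.
    print(ctx, "->", predict_bigram(bigram, ctx), "|", predict_full_context(full, ctx))
```

The bigram predictor cannot tell the two sentences apart because it has thrown away everything except the last word. Whether conditioning on the whole context amounts to "reasoning" is a separate argument, but it is a different mechanism from last-word lookup.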

If this DeepSeek thing is anything more than just hype, I’d love to see it.

Great, the papers and results are open and available right now!

It’s just the most advanced autocorrect ever implemented.

That’s generous.

LLMs can’t believe anything. It’s based on training data up until 2023, so of course it has no “recollection” (read: sources) about current events.

An LLM isn’t a search engine nor an oracle.

Geez I know that, everybody knows it’s just a chatbot. I thought it was a bit funny to share this conversation in this sub but most of the replies are people lecturing about the fact that AI is not sentient and blablabla

Ah, I believe this community is for posting about actual real things that make our society look like a boring dystopia. Not a fictional thing that might be funny.

So that might explain why people are responding the way they do.

Maybe I’m not interpreting the goal of this community right.

I think it’s funny that a bot locked in 2023 would tell me that all the things that -actually happened- in the past week are not plausible, and that I’m probably just inventing a dystopian scenario.

People are downvoting because you worded your title weirdly based on what your screenshot shows. It would be more accurate to say that the bot refuses to believe Musk could be a Nazi (based on past training data), not that it refuses to believe he is based on current events, since it doesn’t know about current events.

Yeah I don’t really care, English is not my first language.

Ok just telling you why it was received that way

gotya thanks

It’s kinda cheating to be honest, you can make a bot say anything you want. But I understand your angle better now, thanks for the extra info!

A great bit of gaslighting, “The very real Nazi salute that he did is not real.”

this has gotta be like astroturfing or something are we really citing LLM content in year of our lord 2025 ?? like gorl

two things can be true:

1 musk IS a nazi

2 LLMs are majorly sucky and trained with old data. the one OP is citing in particular doesn’t even know what year it is 🗿

what are we doing here? stop outsourcing common sense to ARTIFICIAL INTELLIGENCE of all things. we are cooked. 😭

“This software we’ve all been saying is trash that produces trash produced trash!”

This isn’t surprising at all.

AI doesn’t agree with my opinion. AI must be a Nazi sympathizer!

Right. It’s an “opinion” that Musk appeared at a far-right German rally, nothing more.

Dude. The AI is stuck in 2023… Are you dense?

Bye