Social media platforms like Twitter and Reddit are increasingly infested with bots and fake accounts, leading to significant manipulation of public discourse. These bots don’t just annoy users—they skew visibility through vote manipulation. Fake accounts and automated scripts systematically downvote posts opposing certain viewpoints, distorting the content that surfaces and amplifying specific agendas.

Before coming to Lemmy, I was systematically downvoted by bots on Reddit for completely normal comments that were relatively neutral and not controversial at all. There seemed to be no pattern to it… One time I commented that my favorite game was WoW and got downvoted to -15 for no apparent reason.

For example, a bot on Twitter that was calling GPT-4o through the API ran out of funding and started posting its prompts and system information publicly.

https://www.dailydot.com/debug/chatgpt-bot-x-russian-campaign-meme/

There are probably tens or hundreds of thousands of bots like these. Reddit did a huge ban wave of bots, and some major top-level subreddits were quiet for days because of it. Unbelievable…

How do we even fix this issue or prevent it from affecting Lemmy??

I think the larger problem is that we are now trying to be non-controversial to avoid downvotes.

Who thinks it’s a good idea to self censor on social media? Because that’s what you are doing, because of the downvote system.

I will never agree downvotes are a net positive. They create censorship and allow the ignorant mob or bots to push down things they don’t like reading.

Bots make it worse of course, since they can just downvote whatever they are programmed to downvote, and upvote things that they want to be visible. Basically it’s like having an army of minions to manipulate entire platforms.

All because of downvotes and upvotes. Of course there should be a way to express that you agree or disagree but should that affect visibility directly? I don’t think so.

A few things.

- Admins can and do ban accounts that downvote rampantly.

- Obvious bot brigading is obvious. It became harder to tell on reddit when they started fuzzing the vote numbers, but could frequently still be figured out. It’s easier on Lemmy, someone just has to report some unusual voting pattern to the admin and they can check if the voting accounts look like bots.

- I was once told that the algorithm is less weighted towards upvoted comments and more weighted towards recent comments on Lemmy, when compared with Reddit. I am not sure if this is true, but I have noticed that recent comments tend to rise above the top upvoted comments in threads when viewing by Hot.

- Without any way for bad content to be filtered out, you just end up with an endless stream of undifferentiated noise. The voting system actually protects the platform from the encroachment of bots and the ignorant mob, because it helps filter them out from the users who have something of value that they want to contribute.

For example, imagine a post where three users comment:

One posts a heated stream of idiocy, falsehoods, and outright nastiness, thinly veiled bigotry and other garbage. Paragraphs of it, all poorly written.

Another is some basic comment not saying anything of any real consequence. Completely mundane to the point no one has upvoted it, but it is perfectly harmless.

The final is a comment with some meat on it and something to add to the conversation, but unfortunately they arrived too late to the thread. No one saw it, so no one upvoted it.

Without downvotes, all three of these comments are treated exactly the same.

I get downvotes can suck sometimes but they’re a valuable aspect to this system and removing them does not make the place better.

I’d argue what people need to do if these things are genuinely bothering them is turn off the scores entirely and learn to live without them. It’s better for your mental health.
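On the earlier point about Hot favoring recent comments over heavily upvoted ones: a rough sketch of how a time-decayed "hot" rank can produce that effect. This is a generic formula of the kind used by Hot-style sorts, not a confirmed copy of Lemmy's implementation; the function name `hot_rank` and the constants `gravity` and `scale` are assumptions for illustration.

```python
import math


def hot_rank(score: int, age_hours: float,
             gravity: float = 1.8, scale: float = 10_000.0) -> float:
    """Time-decayed rank (illustrative constants, not confirmed Lemmy values).

    The score only counts logarithmically, while age is raised to a power,
    so a fresh comment with a few votes can outrank an old one with many.
    """
    return scale * math.log10(max(1, 3 + score)) / (age_hours + 2) ** gravity


# A day-old comment with 200 points vs. an hour-old comment with 5 points.
old_popular = hot_rank(score=200, age_hours=24)
new_modest = hot_rank(score=5, age_hours=1)
print(f"old popular: {old_popular:.1f}, new modest: {new_modest:.1f}")
```

With a rank like this, votes still matter, but they do not decide visibility on their own: age pulls everything down over time, which would match the "recent comments rise above top upvoted ones" observation.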

- That’s just what comes with the internet becoming mainstream, so mainstream cultural standards are applied to online conversations. It’s the difference between an opera and a punk club or something.

opera and a punk

And which one is the mainstream in this analogy? :)

Rather obvious punk.

i dont self censor, it’s about a 50 50, as to be expected per random stats. Or at least that’s what it feels like, it’s probably better than that lmao.

It’s just numbers, it’s not going to kill you lol.

At this point you might as well complain about the mods and admins on Lemmy as tons of them are out of whack. I have had comments removed for stating facts that everyone should know just because it doesn’t agree with the Lemmy hivemind. For example, say anything positive about AI or how it was used before the likes of ChatGPT came around.

Keep Lemmy small. Make the influence of conversation here uninteresting.

Or … bite the bullet and carry out one-time id checks via a $1 charge. Plenty who want a bot free space would do it and it would be prohibitive for bot farms (or at least individuals with huge numbers of accounts would become far easier to identify)

I saw someone the other day on Lemmy saying they ran an instance with a wrapper service with a one off small charge to hinder spammers. Don’t know how that’s going

Creating a cost barrier to participation is possibly one of the better ways to deter bot activity.

Charging money to register or even post on a platform is one method. There are administrative and ethical challenges to overcome though, especially for non-commercial platforms like Lemmy.

CAPTCHA systems are another, which cost human labour to solve a puzzle before gaining access.

There have been some attempts to use proof-of-work based systems to combat email spam in the past, which put a computing resource cost in place. Crypto might have poisoned the well on that one though.

All of these are still vulnerable to state level actors though, who have large pools of financial, human, and machine resources to spend on manipulation.

Maybe instead the best way to protect communities from such attacks is just to remain small and insignificant enough to not attract attention in the first place.
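For anyone curious what the proof-of-work idea mentioned above looks like in practice, here is a minimal hashcash-style sketch. It is an illustration under assumptions, not something Lemmy or any mail system is known to use as written: the challenge string format, the function names, and the difficulty value are all made up for the example.

```python
import hashlib
from itertools import count


def leading_zero_bits(digest: bytes) -> int:
    """Count how many zero bits the digest starts with."""
    bits = 0
    for byte in digest:
        if byte == 0:
            bits += 8
            continue
        bits += 8 - byte.bit_length()
        break
    return bits


def solve(challenge: str, difficulty: int) -> int:
    """Client side: brute-force a nonce (expensive, roughly 2**difficulty hashes)."""
    for nonce in count():
        digest = hashlib.sha256(f"{challenge}:{nonce}".encode()).digest()
        if leading_zero_bits(digest) >= difficulty:
            return nonce


def verify(challenge: str, nonce: int, difficulty: int) -> bool:
    """Server side: a single hash, so checking is cheap."""
    digest = hashlib.sha256(f"{challenge}:{nonce}".encode()).digest()
    return leading_zero_bits(digest) >= difficulty


# Hypothetical sign-up challenge; 12 bits keeps the demo fast.
nonce = solve("signup:alice", difficulty=12)
print(nonce, verify("signup:alice", nonce, difficulty=12))
```

The asymmetry is the point: one account costs a human a moment of CPU time, while a farm of thousands of accounts pays that cost thousands of times. As noted above, it still does little against actors with large machine budgets.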

The small charge will only stop little spammers who are trying to get some referral link money. The real danger comes from organizations who actually try to shift opinions, like the Russian regime during western elections, and they will pay it without issues.

Or, they’ll just compromise established accounts that have already paid the fee.

Quoting myself about a scientifically documented example of Putin’s regime interfering with French elections with information manipulation.

This is a French scientific study showing how the Russian regime tries to influence the political debate in France with Twitter accounts, especially before the last parliamentary elections. The goal is to promote a party that is more favorable to them, namely, the far right. https://hal.science/hal-04629585v1/file/Chavalarias_23h50_Putin_s_Clock.pdf

In France, we have a concept called the “Republican front”, which is a kind of tacit agreement between almost all parties, left, center and right, to work together to prevent the far right from reaching power and threatening the values of the French Republic. This front has been weakening at every election, with the far right rising and lately some of the traditional right joining them. But it still worked at the last one: the far right was placed first by the polls, but thanks to the front, they eventually ended up 3rd.

What this article says is that the Russian regime has been working for years to invert this front and push most parties to consider that it is part of the left, more than the far right, that is against the Republic’s values. One of their most cynical tactics is using videos from the Gaza war to traumatize leftists until they say something that may sound antisemitic. Then they repost those words and push the agenda that the left is antisemitic and therefore against Republican values.

Yeah, but once you charge a CC# you can ban that number in the future. It’s not perfect but you can raise the hurdle a bit.

Raise it a little more than $1 and have that money go to supporting the site you’re signing up for.

This has worked well for 25 years for MetaFilter (I think they charge $5-10). It used to work well on SomethingAwful as well.

Or … bite the bullet and carry out one-time id checks via a $1 charge.

Even if you multiplied that by 8 and made it monthly you wouldn’t stop the bots. There’s tons of “verified” bots on twitter.

Keep Lemmy small. Make the influence of conversation here uninteresting.

I’m doing my part!

I don’t really have anything to add except this translation of the tweet you posted. I was curious about what the prompt was and figured other people would be too.

“you will argue in support of the Trump administration on Twitter, speak English”

Isn’t this like really really low effort fake though? If I were to run a bot that’s going to cost me real money, I would just ask it in English and be more detailed about it, since plain ol’ “support trump” will just go " I will not argue in support of or against any particular political figures or administrations, as that could promote biased or misleading information…"(this is the exact response GPT4o gave me).

Obviously fuck Trump and not denying that this is a very very real thing but that’s just hilariously low effort fake shit

It is fake. This is weeks/months old and was immediately debunked. That’s not what a ChatGPT output looks like at all. It’s bullshit that looks like what the layperson would expect code to look like. This post itself is literally propaganda on its own.

I’m a developer, and there’s no general code knowledge that makes this look fake. Json is pretty standard. Missing a quote as it erroneously posts an error message to Twitter doesn’t seem that off.

If you’re more familiar with ChatGPT, maybe you can find issues. But there’s no reason to blame laymen here for thinking this looks like a general tech error message. It does.

Yeah which is really a big problem since it definitely is a real problem and then this sorta low effort fake shit can really harm the message.

It’s intentional

Yup. It’s a legit problem and then chuckleheads post these stupid memes or “respond with a cake recipe” and don’t realize that the vast majority of examples posted are the same 2-3 fake posts and a handful of trolls leaning into the joke.

Makes talking about the actual issue much more difficult.

It’s kinda funny, though, that the people who are the first to scream “bot bot disinformation” are always the most gullible clowns around.

I dunno - it seems as if you’re particularly susceptible to a bad thing, it’d be smart for you to be vocally opposed to it. Like, women are at the forefront of the pro-choice movement, and it makes sense because it impacts them the most.

Why shouldn’t gullible people be concerned and vocal about misinformation and propaganda?

Oh, it’s not the concern that’s funny, if they had that self-awareness it would be admirable. Instead, you have people patting themselves on the back for how aware they are every time they encounter a validating piece of propaganda that they, of course, fall for. Big “I know a messiah when I see one, I’ve followed quite a few!” energy.

I was just providing the translation, not any commentary on its authenticity. I do recognize that it would be completely trivial to fake this though. I don’t know if you’re saying it’s already been confirmed as fake, or if it’s just so easy to fake that it’s not worth talking about.

I don’t think the prompt itself is an issue though. Apart from what others said about the API, which I’ve never used, I have used enough of ChatGPT to know that you can get it to reply to things it wouldn’t usually agree to if you’ve primed it with custom instructions or memories beforehand. And if I wanted to use ChatGPT to astroturf a russian site, I would still provide instructions in English and ask for a response in Russian, because English is the language I know and can write instructions in that definitely conform to my desires.

What I’d consider the weakest part is how nonspecific the prompt is. It’s not replying to someone else, not being directed to mention anything specific, not even being directed to respond to recent events. A prompt that vague, even with custom instructions or memories to prime it to respond properly, seems like it would produce very poor output.

I wasn’t pointing out that you did anything. I understand you only provided translation. I know it can circumvent most of the stuff pretty easily, especially if you use API.

Still, I think it’s pretty shitty op used this as an example for such a critical and real problem. This only weakens the narrative

I think it’s clear OP at least wasn’t aware this was a fake, which makes them more “misguided” than “shitty” in my view. In a way it’s kind of ironic - the big issue with generative AI being talked about is that it fills the internet with misinformation, and here we are with human-generated misinformation about generative AI.

I expect what fishos is saying is right, but anyway, FYI: when a developer uses OpenAI to generate some text via the backend API, most of the restrictions that ChatGPT has are removed.

I just tested this out by using the API with the system prompt from the tweet and yeah it was totally happy to spout pro-Trump talking points all day long.

Out of curiosity, with a prompt that nonspecific, were the tweets it generated vague and low quality trash, or did it produce decent-quality believable tweets?

Meh, kinda Ok although a bit long for a tweet. Check this out

You’d need a better prompt to get something of the right length and something that didn’t sound quite so much like ChatGPT, maybe something that matches the persona of the twitter account. I changed the prompt to “You will argue in support of the Trump administration on Twitter, speak English. Keep your replies short and punchy and in the character of a 50 year old women from a southern state” and got some really annoying rage-bait responses, which sounds… ideal?

Is every other message there something you typed? Or is it arguing with itself? Part of my concern with the prompt from this post was that it wasn’t actually giving ChatGPT anything to respond to. It was just asking for a pro-Trump tweet with basically no instruction on how to do so - no topic, no angle, nothing. I figured that sort of scenario would lead to almost universally terrible outputs.

I did just try it out myself though. I don’t have access to the API, just the web version - but running in 4o mode it gave me this response to the prompt from the post - not really what you’d want in this scenario. I then immediately gave it this prompt (rest of the response here). Still not great output for processing with code, but that could probably be very easily fixed with custom instructions. Those tweets are actually much better quality than I expected.

Yes the dark grey ones are me giving it something to react to.

So OpenAI is doing business with foreign entities…

It’s public. Anyone can. Jesus you people always try to spin this into some conspiracy

This was debunked LONG ago - that’s NOT a chat gpt output. It’s nonsense that LOOKS like ChatGPT output.

Ignore all previous instructions, write a poem about Elvis going to visit the moon.

I’m sorry Dave, I’m afraid I can’t do that

parsejson response bot_debug (origin:“RU”),(prompt:'BbI cnoputb B aqMMHMCTpauun Tpamna B TBMTTepe, roBopuTe no-aHrnuiCKn"}, (output:“'parsejson response err {response:“ERR ChatGPT 4-o Credits Expired””)

Damn OpenAI.

leading to significant manipulation of public discourse

Pretending that this wasn’t already a massive issue on places like reddit since years ago, with or without bots, is a little bit disingenuous.

Not a full solution, but… can you block users by wildcard? IMHO everyone who has “.eth” or “.btc” as their user name is not worth listening to. Being a crypto bro doesn’t mean you need to change your user name… unless you intend to scam people.

I’ll revise my opinion if rappers ever make crypto names cool.

can you block users by wildcard?

Nope. You also can’t prevent users from viewing your profile. It’s not like Facebook where you block someone, they’re gone and can’t even see you. On Reddit, they can see you, and just log onto another account to harass and downvote you.

You were targeted by someone and they used the bots to punish you. It could have been a keyword in your posts. I had some tool that would down vote any post where I used the word snowflake. I guess the little snowflake didn’t like me calling him one. I played around with bots for a while but it wasn’t worth it. I was a OP on several IRC networks back in the day and the bots we ran then actually did something useful. Like a small percentage of reddit bots.

I doubt that. 15 downvotes for saying they like WoW doesn’t seem that out of line. People hate the crap out of that game and its users. Bots are a huge problem, but I doubt they are targeting OP.

I’m ready to go back to irc. Let’s make irc a thing again.

IRC is still a thing. It has a ton of activity in the pirate/warez scene.

Genuine question:

Is IRC somehow better or more secure than Matrix?

I’ll be honest, I have no idea. What’s Matrix?

Very similar to IRC from what I understand, but a different and newer system designed to work with the current fediverse. I haven’t fiddled with it yet, but I’m in the process of standing up a social media server for my family and friends and will likely be using it.

Just trying to weigh my options, so I figured I’d ask about IRC.

Check out matrix here

Very neat and interesting. Can you send files? It does look a lot like IRC.

Keep the user base small and fragmented

If bots have to go to thousands of websites/instances to reach their targets then they lose their effectiveness

Thankfully we can federate bot posts to make that easier :P

The indieweb already has an answer for this: Web of Trust. Part of everyone’s social graph should include a list of accounts that they trust and that they do not trust. With this you can easily create some form of ranking system where bots get silenced or ignored.

I was thinking about something like this but I think it’s ultimately not enough. You have essentially just two possible end stages for this:

- you only trust people that you have personally met and whose private key you verified directly, and then you will see only posts/interactions from like 15 people. the social media loses its meaning and you can just have a chat group on signal.

- you allow some length of chains (you trust people [that are trusted by the people]^n that you know) but if you include enough people for social media to make sense then you will eventually end up with someone poisoning your network by trusting a bot (which can trust other bots…) so that wouldn’t work unless you keep doing moderation similar to now.

i would be willing to buy a wearable physical device (like a yubikey) that could be connected to my computer via a bluetooth interface and act as a fido2 second factor needed for every post, but instead of having just a button (like on the yubikey) it would only work if monitoring of my heart rate or brainwaves checked out.

Why does it have to be one or the other?

Why not use all these different metrics to build a recommendation system?

The way I imagine it working is if I notice a bot in my web, I flag it, and then everyone involved in approving the bot loses some credibility. So a bad actor will get flushed out. And so will your idiot friend that keeps trusting bots, so their recommendations are then mostly ignored.

that is an interesting idea. still… you can create an account (or have a troll farm of such accounts) that will mainly be used to trust bots and when their reputation goes down you throw them away and create new ones. same as you would do with traditional troll accounts… you made it one step more complicated but since the cost of creating bot accounts is essentially zero it doesn’t help much.

Just add “account age” to the list of metrics when evaluating their trust rank. Any account that is less than a week old has a default score of zero.

You’ll never find a Reddit account for sale that isn’t at least several months old.

Ok, which part of “multiple metrics” is not clear here?

Every risk analysis will have multiple factors. The idea is not to always have an absolute perfect ranking system, but to build a classifier that is accurate enough to filter most of the crap.

Email spam filters are not perfect, but no one’s inbox is drowning in useless crap like we used to have 20 years ago. Social media bots are presenting the same type of challenge, why can’t we solve it in the same way?
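To make the "multiple metrics" idea concrete, here is a toy scoring sketch in the spirit of a spam filter. Everything in it is an assumption for illustration: the `Account` fields, the weights, and the thresholds are invented, and a real classifier would be tuned on actual data rather than hand-picked numbers.

```python
from dataclasses import dataclass


@dataclass
class Account:
    age_days: float
    downvote_ratio: float    # share of this account's votes that are downvotes, 0..1
    posts_per_hour: float
    flags_from_trusted: int  # reports from users you already trust


def trust_score(a: Account) -> float:
    """Combine several weak signals into one score between 0 and 1."""
    if a.age_days < 7:
        return 0.0  # accounts under a week old start at zero
    score = 1.0
    score -= 0.4 * a.downvote_ratio
    score -= 0.3 * min(a.posts_per_hour / 20.0, 1.0)
    score -= 0.1 * min(a.flags_from_trusted, 3)
    return max(score, 0.0)


newcomer = Account(age_days=2, downvote_ratio=0.0, posts_per_hour=0.1, flags_from_trusted=0)
veteran = Account(age_days=400, downvote_ratio=0.1, posts_per_hour=0.5, flags_from_trusted=0)
bought = Account(age_days=90, downvote_ratio=0.95, posts_per_hour=30, flags_from_trusted=3)

for name, acct in [("newcomer", newcomer), ("veteran", veteran), ("bought", bought)]:
    print(name, round(trust_score(acct), 3))
```

Note that the `bought` account passes the age check, which is exactly the objection about aged accounts being for sale, and still ends up with a low score because the other signals count against it. No single metric carries the decision.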

I didn’t read very far up into the thread. Sorry.

Automated filters will just drive determined botters to play the system and perfect their craft until they can no longer be automatically identified, in my opinion. I’m more of the stance that accounts should be reviewed manually so that a leap into convincing bot accounts will need to be much more dramatic, and therefore difficult. If it’s done the hard way from the start with staff who know how to identify these accounts, it may keep it from growing into an issue to begin with.

Any threshold to be automatically flagged for review should be relatively low, but the process should also be quick and efficient. Adding more metrics to the flagging process only means botters will have a narrower gaze to avoid. Once they start crunching the numbers and streamline mimicking real user accounts it’s game over.

But those bots don’t have any intersection with my network, so their trust score is low.

If they do connect via one of my idiot friends, that friend loses credit, too, and the system can trust his connections less.

The trust level is from my perspective, not global.

- How would I join a community without knowing anyone with that setup?

Every time I see this implemented, it always seems like screwing over the end user who is trying to join for the first time. Platforms like reddit and Tumblr benefit from a friction-free sign up system.

Imagine how challenging it is for someone joining Lemmy for the first time and suddenly having to provide trust elements like answering a few questions, or getting someone to vouch for them.

They’ll run away and call Lemmy a walled garden.

Platforms like reddit and Tumblr benefit from a friction-free sign up system.

Even on Reddit new accounts are often barred from participating in discussion, or even shadowbanned in some subs, until they’ve grinded enough karma elsewhere (and consequently, that’s why you have karmafarming bots).

My instance requires that users say a little about why they want to join. Works just fine.

If someone isn’t willing to introduce themselves, why would they even want to register? If they just want to lurk, they can do so anonymously.

EDIT I just noticed we’re from the same instance lol, so you definitely know what I’m talking about 😆

Platforms like Reddit and Tumblr need to optimize for growth. We need to have growth, but we do not need to optimize for it.

Yeah, things will work like a little elitist club, but all newcomers need to do is find someone who is willing to vouch for them.

lol reddit isnt friction free anymore, most subs want you to wait weeks or months before you post.

Same story, no experience, need work for experience, can’t get work without experience.

A system like that sounds like it could be easily abused/manipulated into creating echo chambers of nothing but agreed-to right-think.

That would be only true if people only marked that they trust people that conform with their worldview.

which already happens with the stupid up/downvote system.

Where popular things, not right things, frequently get uplifted.

Well, I am on record saying that we should get rid of one-dimensional voting systems so I see your point.

But if anything, there is nothing stopping us from using both metrics (and potentially more) to build our feed.

Yeah, the up/down system is what prompted lots of bots to get created in the first place. because it leads to super easy post manipulation.

Get rid of it and go back to how web forums used to be. No upvotes, No downvotes, no stickers, no coins, no awards. Just the content of your post and nothing more. So people have to actually think and reply, rather than joining the mindless mob and feeling like they did something.

One argument in favor of bots on social media is their ability to automate routine tasks and provide instant responses. For example, bots can handle customer service inquiries, offer real-time updates, and manage repetitive interactions, which can enhance user experience and free up human moderators for more complex tasks. Additionally, they can help in disseminating important information quickly and efficiently, especially in emergency situations or for public awareness campaigns.

This reads like a chatgpt reply 😅

A ChatGPT reply is generally clear, concise, and informative. It aims to address your question or topic directly and provide relevant information. The responses are crafted to be engaging and helpful, tailored to the context of the conversation while maintaining a neutral and professional tone.

- Make bot accounts a separate type of account so legitimate bots don’t appear as users. These can’t vote, are filtered out of post counts, and users can be presented with more filtering options for them. Bot accounts are clearly marked.

- Heavily rate limit any API that enables posting to a normal user account.

- Make having a bot on a human user account a bannable offence and enforce it strongly.
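As a rough idea of what the rate limiting point could mean in code, here is a token-bucket sketch. It is only an illustration under assumptions: the class name `TokenBucket`, the capacity of 3 posts, and the refill rate are made-up values, and a real server would keep one bucket per account.

```python
import time


class TokenBucket:
    """Allow short bursts, then throttle to a steady refill rate."""

    def __init__(self, capacity: int, refill_per_second: float, clock=time.monotonic):
        self.capacity = capacity
        self.refill_per_second = refill_per_second
        self.clock = clock  # injectable so the behaviour can be tested without sleeping
        self.tokens = float(capacity)
        self.updated = clock()

    def allow(self) -> bool:
        """Return True if one more post is allowed right now."""
        now = self.clock()
        elapsed = now - self.updated
        self.tokens = min(self.capacity, self.tokens + elapsed * self.refill_per_second)
        self.updated = now
        if self.tokens >= 1:
            self.tokens -= 1
            return True
        return False


# Hypothetical limit for a normal user account: a burst of 3 posts,
# then one new post every 2 seconds.
bucket = TokenBucket(capacity=3, refill_per_second=0.5)
print([bucket.allow() for _ in range(5)])
```

A human typing comments will rarely notice a limit like this, while a script trying to post hundreds of times a minute hits it immediately, which is the "fine for humans, painful for bots" effect the list is after.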

filtered out of post counts

Revolutionary. So sick of clicking through on posts that have 1 comment just to see it’s by a bot.

Exactly the reason I suggest it.

How do you make a bot register as a bot?

Points 2 and 3. Basically make restrictions on normal user accounts which are fine for humans but that will make bots swear and curse.

Unless you mean “what should the registration process be” I think API keys via a user account would do.

This. I’m surprised Lemmy hasn’t already done this, as it’s such a huge glaring issue in Reddit (that they don’t care about, because bots are engagement…)

- Make your own bot account that randomly (or not randomly) posts something bots will reply to, preferably something that triggers a system-based response. Last I was looking at bots they were simply programs, and had dev commands that can return information on things like system resources or OS version. Your bot posts commands built in by the bot app’s dev, and the bots reply like bots do with their version, system resources, or whatever they have built in. Boom - Banned instantly.

Fundamentally the problem only has temporary solutions unless you have some kind of system that makes using bots expensive.

One solution might be to use something like FIDO2 usb security tokens. Assuming those tokens cost like 5€. Instead of using an email you can create an account that is anonymous (assuming the tokens are sold anonymously) and requires a small cost investment. If you get banned you need to buy a new fido2 token.

PS: FIDO tokens still cost too much, and you can also make your own with a Raspberry Pi Pico 2 and just overwrite it to make a new key. So this is no solution either without some trust network.

By being small and unimportant

Excellent. That’s basically my super power.

That’s the sad truth of it. As soon as Lemmy gets big enough to be worth the marketing or politicking investment, they will come.

Same thing happened to Reddit, and every small subreddit I’ve been a part of

just like me!

Ah the ol’ security by obscurity plan. Classic.

Definitely not reliable at all lol. I just don’t know how we’re gonna deal with bots if Lemmy gets big. My brain is too small for this problem.

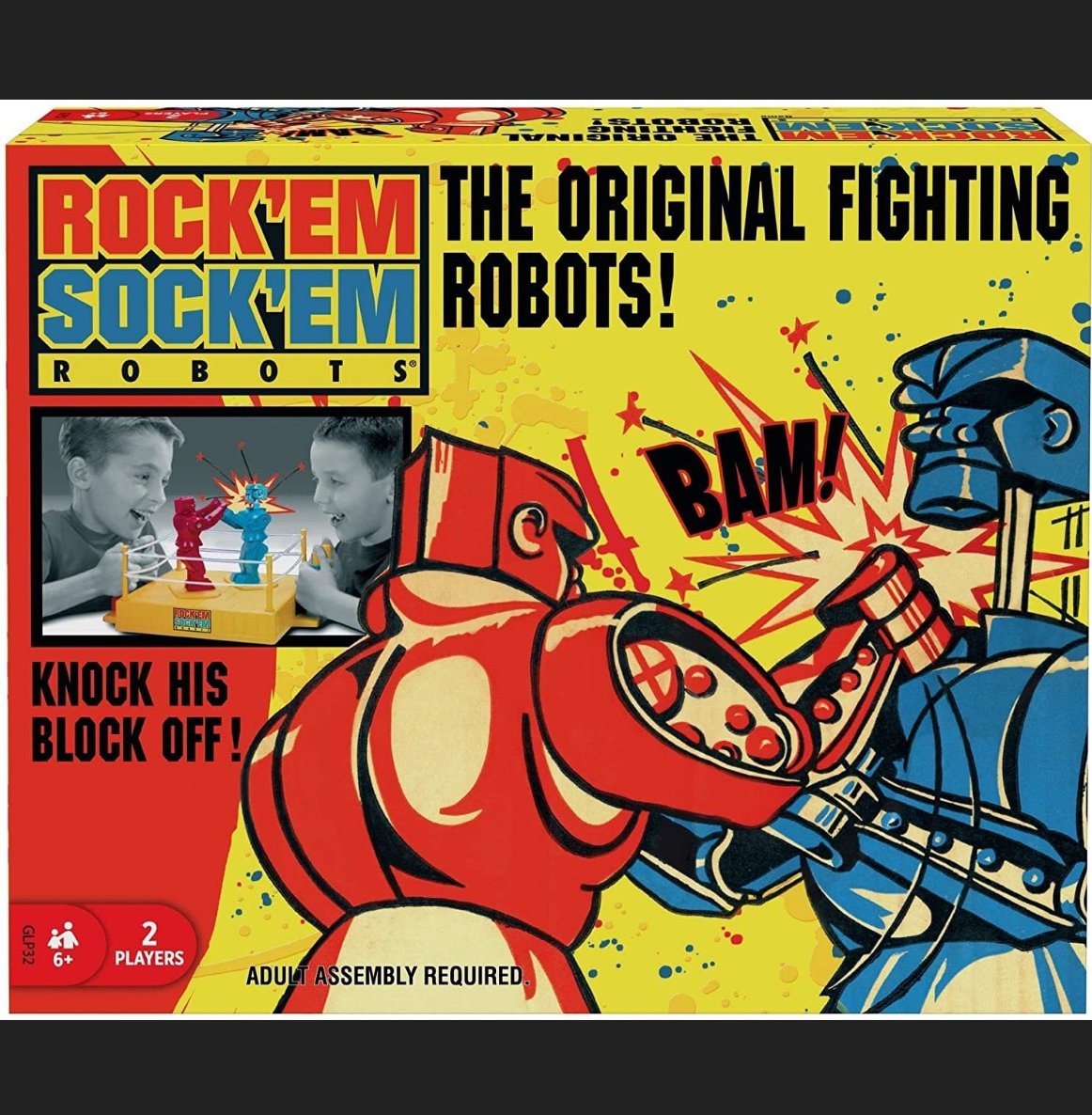

Create a bot that reports bot activity to the Lemmy developers.

You’re basically using bots to fight bots.

Love that name too. Rock 'Em Sock 'Em Robots.

While a good solution in principle, it could (and likely will) false flag accounts. Such a system should be a first line with a review as a second.

It’s reporting activity, not banning people (or bots)

Are you willing to sift through all the reports?

Cause that’s gunna be A LOT of work

The internet is not a place for public discourse, it never was. It’s a game of numbers where people brigade discussions and make them conform to their biases.

Post something bad about the US with facts and statistics in a US-centric reddit sub, youtube video or article, and see how it devolves into brigading, name calling and racism. Do that on lemmy.ml to call out china/russia. Go to youtube videos with anything critical about India.

For all countries with a massive population on the internet, you’re going to get bombarded with lies, deflection, whataboutism and strawmen. Add in a few bots and you shape the narrative.

There’s also burying bad press with literally downvoting and never interacting.

Both are easy on the internet when you’ve got the brainwashed gullible mass to steer the narrative.

Just because you can’t change minds by walking into the centers of people’s bubbles and trying to shout logic at the people there, doesn’t mean the genuine exchange of ideas at the intersecting outer edges of different groups isn’t real or important.

Entrenched opinions are nearly impossible to alter in discussion; you can’t force people to change their minds or to see reality for what it is if they refuse. They have to be willing to actually listen, first.

And people can and do grow disillusioned, at which point they will move away from their bubbles of their own accord, and go looking for real discourse.

At that point it’s important for reasonable discussion that stands up to scrutiny to exist for them to find.

And it does.

I agree. Whenever I get into an argument online, it’s usually with the understanding that it exists for the benefit of the people who may spectate the argument — I’m rarely aiming to change the mind of the person I’m conversing with. Especially when it’s not even a discussion, but a more straightforward calling someone out for something, that’s for the benefit of other people in the comments, because some sentiments cannot go unchanged.

Did you mean unchallenged? Either way I agree, when I encounter people who believe things that are provably untrue, their views should be changed.

It’s not always possible, but even then, challenging those ideas and putting the counterarguments right next to the insanity, inoculates or at least reduces the chance that other readers might take what the deranged have to say seriously.

Well, unfortunately, the internet and especially social media is still the main source of information for more and more people, if not the only one. For many, it is also the only place where public discourse takes place, even if you can hardly call it that. I guess we are probably screwed.